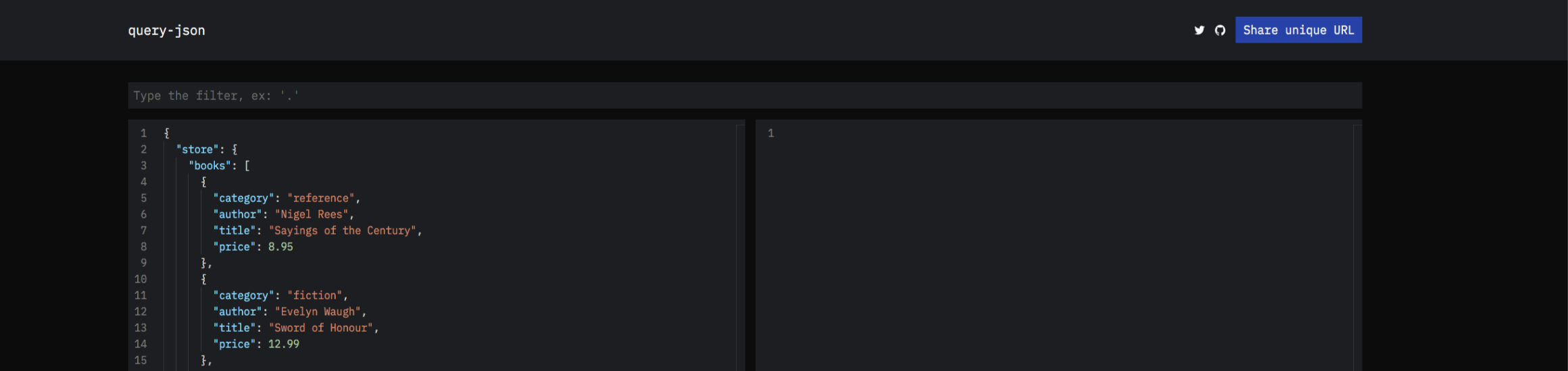

query-json is a faster and simpler re-implementation of jq's language in Reason, compiled to a native binary and to a JavaScript library.

It's a CLI to run small programs against JSON, the same idea as sed but for JSON files. For a web engineer, it's an essential tool while developing APIs.

I started it with the goal of creating something useful and learning during the process. I was very interested in learning how to write parsers and compilers using the OCaml stack (menhir and sedlex), compiling the result to any architecture, and trying to compile it to JavaScript.

This post explains the project, how it was made and which decisions I took along the way, and closes with some reflections.

Why learn about parsers/compilers

I had some idea of the theory, but it was vague and of little practical use. It was the right time to learn more, since I had made styled-ppx, a ppx (PreProcessor Extension) that allows CSS-in-Reason/OCaml. It needs to parse the CSS and have a backend that compiles to bs-emotion.

I asked @EduardoRFS for help writing a CSS parser that supports the entire CSS3 specification, a parser that I want to understand, improve and maintain over time.

How it works

query-json ".store.books | filter(.price > 10)" stores.json

This reads stores.json, accesses the "store" field and then its "books" field. Since "books" is an array, it runs a filter over each item, keeping those whose "price" is larger than 10, and finally prints the resulting list.

[
  {
    "title": "War and Peace",
    "author": "Leo Tolstoy",
    "price": 12.0
  },
  {
    "title": "Lolita",
    "author": "Vladimir Nabokov",
    "price": 13.0
  }
]

The first argument is called query and it's a jq expression. The second one is called json or payload, and it's a valid JSON file.

The semantics of jq consist of a set of piped operations, where each output is connected to the next input and the first input is the JSON itself. Some pseudo-code to illustrate:

{ /* json */ } | filter | transform | count
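As a rough analogy only (this is not how query-json is implemented), the same shape can be written with Reason's own pipe operator over a plain list. The book list, including its third entry, is made up for illustration.

```reason
/* Made-up data for illustration: (title, price) pairs. */
let books = [
  ("War and Peace", 12.0),
  ("Lolita", 13.0),
  ("Placeholder cheap book", 8.0),
];

/* Each step receives the output of the previous one,
   like `json | filter | transform | count`. */
let count =
  books
  |> List.filter(((_, price)) => price > 10.0)
  |> List.map(((title, _)) => title)
  |> List.length;
```

Here count ends up being 2, since only two prices are above 10.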

In order to transform the query to a set of operations that run against a JSON, we will divide the problem into 3 steps: parse, compile and run.

Parsing

The parser is responsible for transforming a string into an AST (Abstract Syntax Tree) and for providing a good error message when the input is malformed.

One of the beauties of jq is that all the expressions are piped by default, so .store | .books is equivalent to .store.books. I designed the AST to reflect that pipe structure directly. If you want to know more about jq's language, check their wiki.

Let's dive into an example. When the parser receives .store.books, it returns:

Pipe(Key("store"), Key("books"));

All the operations are transformed into constructors like the ones above (Pipe, Key). Those constructors are called Variants.

Variants model values that may assume one of many known variations. This feature is similar to enums in other languages, but each variant may optionally carry data inside it. Variants belong to a large group of types called ADTs (algebraic data types).

The entire query-json program representation is one big recursive Variant.

Continuing with a more complex example, let's parse .store.books | filter(.price > 10):

Pipe(
  Pipe(Key("store"), Key("books")),
  Filter(Pipe(Key("price"), Literal(Number(10))))
);

You can see more examples in the parsing tests.
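The AST shape used in these examples can be sketched as a recursive variant type. This is a simplified, hypothetical version: the constructor names follow the examples above, but the real query-json type has many more cases, and the String literal case, the float payload of Number and the size helper are assumptions added here for illustration.

```reason
/* Hypothetical, simplified literals. */
type literal =
  | Number(float)
  | String(string);

/* A recursive variant: Filter and Pipe contain other expressions. */
type expression =
  | Key(string)
  | Literal(literal)
  | Filter(expression)
  | Pipe(expression, expression);

/* .store.books, built by hand instead of by the parser. */
let books = Pipe(Key("store"), Key("books"));

/* The larger example from above, written with the same constructors. */
let query =
  Pipe(books, Filter(Pipe(Key("price"), Literal(Number(10.0)))));

/* A recursive function over the recursive type: count the nodes. */
let rec size = expression =>
  switch (expression) {
  | Key(_)
  | Literal(_) => 1
  | Filter(inner) => 1 + size(inner)
  | Pipe(left, right) => 1 + size(left) + size(right)
  };
```

Because the type is closed, the compiler can warn when a switch forgets one of the cases, which helps as the language grows.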

Compiling

The compilation step receives the AST expression and transforms it into code. It's a big recursive pattern match, which is another great feature of Reason, and looks something like this:

let rec compile = (expression, json) => {
  switch (expression) {
  | Empty => empty
  | Keys => keys(json)
  | Key(key, opt) => member(key, opt, json)
  | Index(idx) => index(idx, json)
  | Head => head(json)
  | Tail => tail(json)
  | Length => length(json)
  /* [...] */
  }
};

The left side lists all the possible Variants and the right side the operations. Those operations transform the JSON. This is where map, filter, reduce, index, etc. are implemented.
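To make the idea concrete, here is a minimal, self-contained sketch of such a recursive compile function. Everything in it is an assumption made for illustration: the tiny json type stands in for yojson's, the expression type has only four cases, and member takes two arguments instead of the three shown above.

```reason
/* Hypothetical, tiny JSON type standing in for yojson's. */
type json =
  | JNull
  | JNumber(float)
  | JString(string)
  | JList(list(json))
  | JObject(list((string, json)));

/* Hypothetical, reduced set of operations. */
type expression =
  | Identity
  | Key(string)
  | Length
  | Pipe(expression, expression);

/* Look up a field; JNull when it is missing or json is not an object. */
let member = (key, json) =>
  switch (json) {
  | JObject(fields) =>
    switch (List.assoc_opt(key, fields)) {
    | Some(value) => value
    | None => JNull
    }
  | _ => JNull
  };

/* Length of a list or a string; JNull for anything else. */
let length = json =>
  switch (json) {
  | JList(items) => JNumber(float_of_int(List.length(items)))
  | JString(text) => JNumber(float_of_int(String.length(text)))
  | _ => JNull
  };

let rec compile = (expression, json) =>
  switch (expression) {
  | Identity => json
  | Key(key) => member(key, json)
  | Length => length(json)
  /* A pipe feeds the output of the left side into the right side. */
  | Pipe(left, right) => compile(right, compile(left, json))
  };

/* Usage: the equivalent of `.store.books | length`. */
let payload =
  JObject([("store", JObject([("books", JList([JNull, JNull]))]))]);
let query = Pipe(Pipe(Key("store"), Key("books")), Length);
let result = compile(query, payload);
```

In this sketch result is JNumber(2.0), and a missing key yields JNull instead of an error, which is a simplification.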

Running

This is the easier part. The compile step gives us back a curried function that expects a JSON as its only argument, so we just apply the function to this JSON and print the result.

This example only describes the happy path. In reality, the parsing and compilation steps return a Result type, which allows handling errors and reporting them when it matters.
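A minimal sketch of how Result values can be threaded through the three steps. The parse and compile functions here are hypothetical stand-ins that work on plain strings, not query-json's real ones, and the error messages are invented for the example.

```reason
/* Hypothetical stand-in for the parser: rejects an empty query. */
let parse = query =>
  if (query == "") {
    Error("Empty query");
  } else {
    Ok(query);
  };

/* Hypothetical stand-in for the compiler: returns a function
   that still expects the JSON, wrapped in Ok. */
let compile = ast => Ok(json => ast ++ " applied to " ++ json);

/* Run only reaches the last step if both earlier steps succeeded. */
let run = (query, json) =>
  switch (parse(query)) {
  | Error(message) => Error("Parse error: " ++ message)
  | Ok(ast) =>
    switch (compile(ast)) {
    | Error(message) => Error("Compile error: " ++ message)
    | Ok(program) => Ok(program(json))
    }
  };
```

With these stand-ins, run("", "{}") returns Error("Parse error: Empty query"), while a non-empty query returns an Ok value.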

Distribution

We have covered how it works internally, along with a brief overview of the architecture. Now let's look at how developers can use it on their machines.

How to do cross-compilation to binary

query-json is built with dune, which supports Reason out of the box. dune runs the OCaml compiler, which can produce either native code or bytecode (which is run with the bytecode interpreter). I chose to compile to native code to get a fast, single executable.

The whole build process and its tests run on our CI in GitHub Actions, which allows running Mac, Windows and Linux images. I distribute the pre-built binaries for each of them through GitHub Releases and the npm registry, so npm users can install it from the command line and everyone else can download it from the GitHub release page.

How to compile to the Web

Apart from compiling to the executable, query-json is compiled to JavaScript as well.

The compilation to JavaScript is the sweet section of this blog post and the part I'm most proud of. It didn't take a crucial effort on my part, but being capable of releasing 2 distributables from a single codebase, including all of query-json's dependencies (menhir, sedlex and yojson), seems like magic.

To accomplish this, I'm using js_of_ocaml (jsoo for short). jsoo is a compiler that takes an intermediate representation of the OCaml compiler (the bytecode I mentioned before) and transforms it into JavaScript.

Since dune supports jsoo out of the box, I just needed to modify the executable's stanza, adding (modes js):

(executable
  (name Js)
  (modes js)
  (libraries console.lib source yojson js_of_ocaml)
)

Made query-json's playground

After compiling to a JavaScript artefact, I could run it synchronously in the browser. I made a playground to teach people how to use it without installing a binary. Aside from making it accessible to users, it helped me test new versions on each pull request.

I built a tiny website using jsoo and a few cool dependencies: jsoo-react and jsoo-css. You can try it yourself here:

https://query-json.netlify.app

The query-json computation runs in the browser on each keystroke, which means that the playground is offline-ready. Compare that with the official jq playground, which needs to communicate with a backend, run jq there somehow, and come back with a response.

Having a playground as a serverless frontend app is a massive improvement over backend-dependent ones. Actually, most of the REPLs for Reason, OCaml, Flow and ReScript (all written in OCaml) use js_of_ocaml for their playgrounds.

Benefits

Using jsoo seems to be a powerful way to run your OCaml code in a browser without much hassle. This is a key takeaway for me from making this project. But distribution is not the only benefit. Here's a list of other upsides, in my opinion:

- Portability: Moving code from server to client or vice versa, sharing marshal/unmarshal code, easier contract testing.

- Familiarity: Writing the same patterns everywhere benefits newcomers, who need to learn fewer platform-specific rules.

- Usage of OCaml's ecosystem: Access to many libraries and ppxs and to OCaml's latest features.

- Features that weren't possible before: Some apps might benefit from server-side rendering, others from moving work offline, and many app-specific designs are unblocked by this.

Future

The future of query-json is to provide a better experience in running operations on top of JSON.

Providing better error messages and better performance have been its only goals.

For me, jq is a double-edged sword: it's very powerful but confusing. The number of questions on StackOverflow suggests that there are a lot of problems the language/compiler doesn't solve. If query-json gets a lot of traction, I would diverge from the jq syntax and try to solve those confusing parts.

The other mission of query-json is to push performance forward. Right now we are implementing most of the functionality that has been missing since I first released it, and next is to explore performance optimizations, such as:

- Replacing menhir with a hand-written parser and lexer

- Refactoring it using OCaml multicore (once it's published!)

- Improving the JSON parsing. There are a few techniques to enable streaming or partial JSON parsing

Final

I hope you liked the project and the story. Let me know if you are interested in these topics; I'm always happy to chat and help.

Thanks to everyone who reviewed this blog post: Javi, Enric and Gerard.

Thanks for reaching the end. Let me know if you have any feedback, corrections or questions. Always happy to chat.
